Curious about deploying LLMs? Our VP of Customer Experience, Jeff Geiser, put together this quick walkthrough on running Llama 8B on a single RTX 4090, then scaling to a hybrid setup across regions.

- Products

Distributed Inference

Deploy and scale AI anywhere

Fabric for AI

High-speed network for AI connectivity

AI Gateway

One-stop access to global models

Bare Metal

On-demand dedicated servers

Elastic Compute

Scalable virtual servers

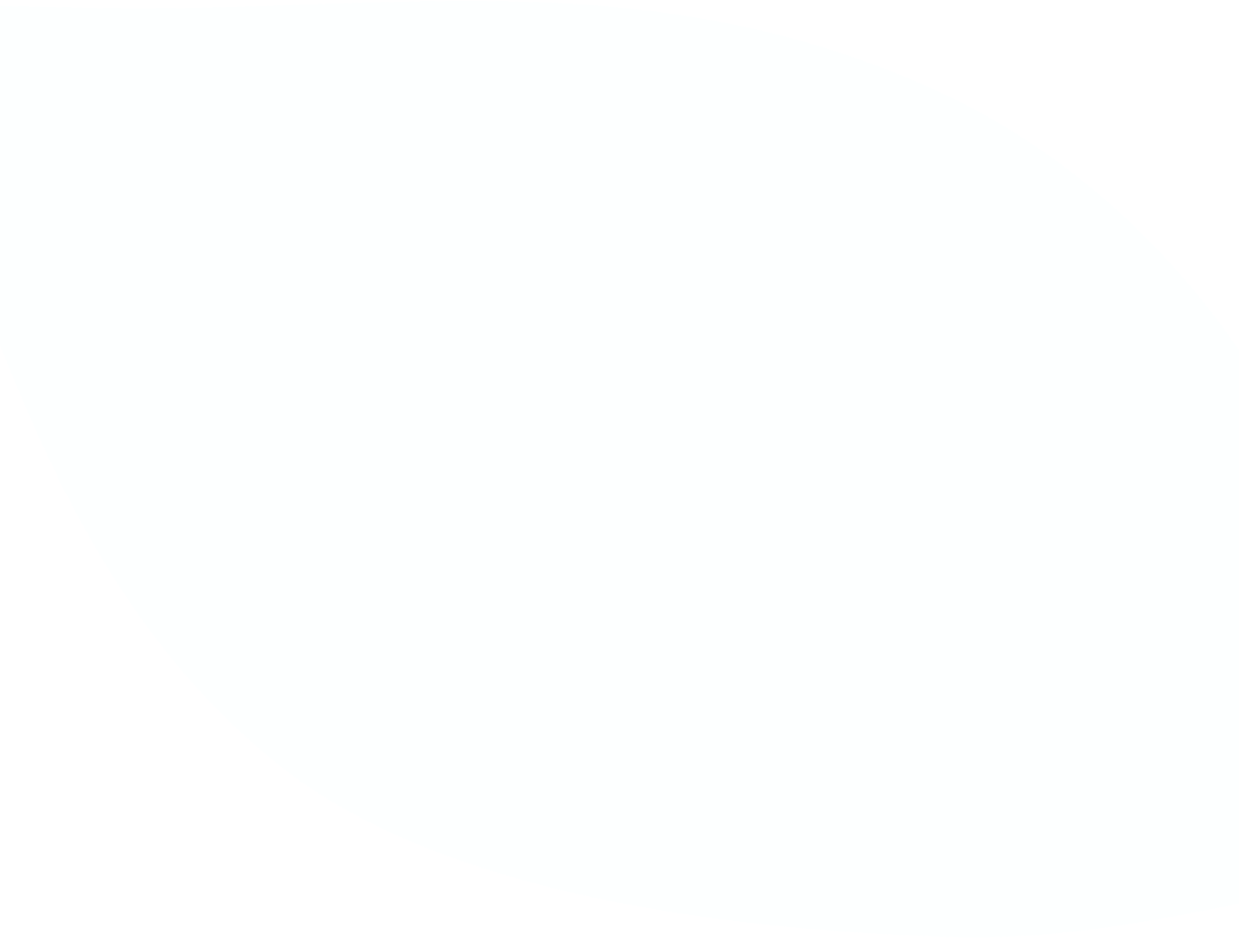

Cloud Connect

Onramp to public clouds

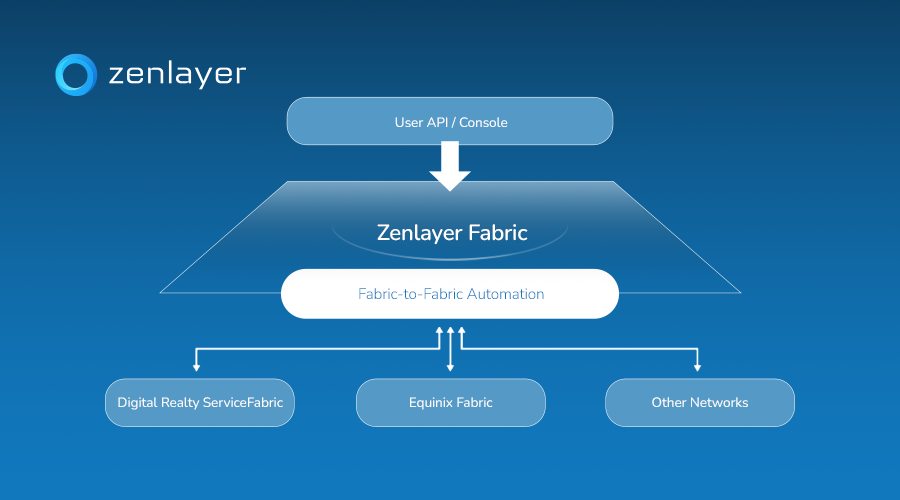

Cloud Router

Layer 3 mesh network

Private Connect

Deploy and scale AI anywhere

Virtual Edge

Virtual access gateway to private backbone

Acceleration

Global Accelerator

Improve application performance

CDN

Global content delivery network

IP Transit

IP Transit

High-performance internet access

Edge Colocation

Colocation close to end users

Custom AI Services

Global AI infrastructure deployments

ComputeNetworkingAcceleration

Connectivity
